2.3 Cybernetics

It's difficult to sum up the goals and inspirations of Cybernetics in a single neat word or phrase. Historians of science acknowledge the mathematician and physicist Norbert Wiener (1894–1964) as the intellectual father of the field. It was he who coined the term 'cybernetics' for his new thinking – the word first appears in Plato in the sense of 'the art of navigation' and was also used by the French physicist André-Marie Ampère to mean 'the science of government'. During the Second World War, Wiener had worked in gunnery control, on a device that would automatically track an enemy aircraft, predict its path across the sky and keep an anti-aircraft gun continuously aimed at it. Although the machine was never fully constructed, Wiener gained an important insight from it. It was clear to him that such a device was not a simple automaton, like Vaucanson's creations; unlike them, it seemed, in a very rudimentary way, to be acting purposefully, as if it had agency. How was this possible? Wiener wrote afterwards:

I came to the conclusion that an extremely important factor in voluntary activity is what control engineers term feedback ... It is enough to say here that when we desire a motion to follow a given pattern, the difference between this pattern and the actually performed motion is used as a new input to cause the part regulated to move in such a way as to bring its motion closer to that given by the pattern ...

The concept of feedback is so central to Cybernetics and to new trends in artificial intelligence that we should dwell on it for a moment.

Exercise 4

Try to come up with your own definition of feedback. Use a dictionary, or search the Web if you want, but use your own words as far as possible.

Comment

Most definitions seem to agree on the central idea that feedback is a process in which all or part of the output of a system is passed back to become its input. However, this seems to me to miss something of what Wiener was trying to say. I'll return to this point shortly.

The use of feedback as a means of control had been known for some time. A classic example, quoted in most textbooks, is the steam governor. Eighteenth-century engineers working with steam engines were faced with the problem of controlling the flow of steam that determined the speed of an engine. If too much steam entered its cylinders it would turn too fast, and might possibly break down under the strain. If too little entered, then it would run too slowly. The aim was to keep the engine running at a constant speed, by continuously monitoring the rate at which it was turning, and opening or closing a valve to increase or diminish the inward flow of steam, as required.

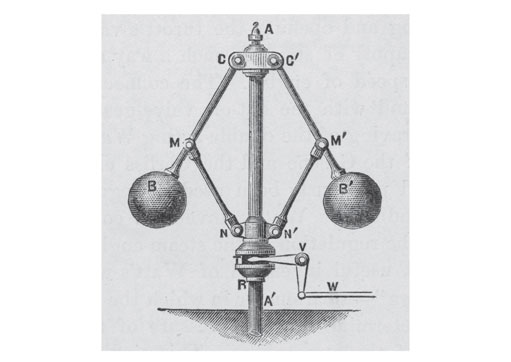

Of course, high-speed electronic monitoring technology was unknown at the time, so at first this seemed an intractable problem. However, in 1788 the Scottish engineer James Watt introduced a solution that was beautiful in its elegance and simplicity – the centrifugal steam governor (Figure 7).

The device used two heavy balls, mounted on arms that were free to swing inwards or outwards. These arms were connected to a regulator that opened or closed the steam valve, and also to the main drive shaft, so the arm assembly rotated at the same speed as the engine. If the engine started to turn too fast, centrifugal force drove the balls and arms upwards and outwards in wider circles. This caused the steam valve to close, choking off the flow of steam and thus reducing speed. As the engine's speed diminished, the balls lowered, the valve re-opened and more steam was admitted, speeding the engine up again. In practice the device responded quickly to changes in engine speed and was able to hold the engine close to a constant rate. The centrifugal governor can still be seen on steam engines. It is a perfect example of negative feedback: any deviation from the desired speed produces a correction in the opposite direction.
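To make the idea of negative feedback concrete, here is a minimal sketch of a governed engine in Python. It is a toy model, not a description of Watt's actual mechanism: the function name `run_engine`, the constants (20.0 for steam power, 0.1 for friction) and the `gain` parameter are all assumptions chosen only so the numbers are easy to follow. The valve opening is reduced in proportion to how far the speed is above the target, and increased when the speed is below it.

```python
def run_engine(gain, load=2.0, target=100.0, steps=1000, dt=0.1):
    """Simulate a toy engine and return its final speed.

    gain   -- how strongly the (hypothetical) governor reacts to a speed error;
              gain=0.0 means the valve is fixed and there is no feedback
    load   -- an extra drag placed on the engine, which tends to slow it down
    target -- the speed we would like the engine to hold
    """
    speed = target  # start at the desired speed, then apply the load
    for _ in range(steps):
        # Negative feedback: too fast -> close the valve, too slow -> open it.
        valve = 0.5 - gain * (speed - target)
        valve = min(1.0, max(0.0, valve))  # a valve cannot be more than fully open or shut

        # Assumed engine behaviour: steam speeds it up, friction and load slow it down.
        acceleration = 20.0 * valve - 0.1 * speed - load
        speed += acceleration * dt
    return speed


if __name__ == "__main__":
    print(f"without governor: {run_engine(gain=0.0):.1f}")
    print(f"with governor:    {run_engine(gain=0.05):.1f}")
```

With these assumed values, the ungoverned engine slows to roughly 80 under the extra load, while the governed engine settles at roughly 98. Notice that a simple proportional governor of this kind does not restore the target speed exactly; it only keeps the engine close to it.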

But I think there was slightly more in what Wiener was claiming for his anti-aircraft predictor. At the start of this section I quoted briefly from his comments on the fixed patterns of the automaton – you can take a quick look back at this if you want. Wiener continued this line of thought as follows:

The figures themselves have no trace of communication with the outer world, except in this one-way stage of communication with the established mechanism of the music box. They are blind, deaf and dumb and cannot in any way vary their activity in the least from the conventional pattern.

Now, in the case of the anti-aircraft predictor, where would the feedback come from? Not from anywhere in the device itself, but from the motion of the aircraft across the sky. The machine constantly adjusts its prediction and its aim as it gets fresh feedback information on the actual movements of the aircraft. The main point about this kind of feedback, then, is that it comes from the environment outside the machine. The device is in constant contact with the world around it.
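The difference between a fixed-pattern automaton and a device that takes feedback from its environment can be sketched in a few lines of Python. This is an illustration only and not Wiener's own method: it uses a simple alpha-beta tracking filter as a stand-in, and the function name `track`, the parameters `alpha` and `beta`, and the invented flight path are all assumptions. At each step the tracker predicts where the aircraft will be, observes where it actually is, and uses the difference to correct both its position and velocity estimates.

```python
def track(observations, alpha=0.5, beta=0.3, dt=1.0):
    """Follow a moving target and return the size of each prediction error.

    observations -- positions of the target, one per time step; this is the
                    information arriving from the world outside the machine
    alpha, beta  -- assumed correction strengths for position and velocity
    """
    position, velocity = observations[0], 0.0
    errors = []
    for observed in observations[1:]:
        predicted = position + velocity * dt   # where we expect the target to be
        error = observed - predicted           # pattern minus actual performance
        errors.append(abs(error))
        # Feed the error back in to improve both estimates.
        position = predicted + alpha * error
        velocity = velocity + (beta / dt) * error
    return errors


# An invented flight path: steady motion for 20 steps, then a sudden change of course.
path, p = [], 0.0
for t in range(60):
    path.append(p)
    p += 3.0 if t < 20 else -2.0

errors = track(path)

if __name__ == "__main__":
    print(f"first error:          {errors[0]:.2f}")
    print(f"just after the turn:  {errors[20]:.2f}")
    print(f"final error:          {errors[-1]:.5f}")
```

The prediction error is large at the start and again just after the aircraft changes course, but each time it shrinks as fresh observations arrive. An automaton running a fixed pattern would have no way of noticing the turn at all.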

After the war, a group of major talents assembled around the banner of Cybernetics. These included neurophysiologists Warren McCulloch and Grey Walter, mathematicians Walter Pitts and John von Neumann, the engineer Julian Bigelow, the psychiatrist William Ross Ashby, and others including anthropologists, physicists and economists. As I claimed above, it's difficult to find a neat paraphrase of the movement's aims. As this list suggests, Cybernetics was from the start a multidisciplinary project, an abstract study belonging to no particular field. Wiener himself described Cybernetics as:

... a new field in science. It combines under one heading the study of what in a human context is sometimes loosely described as thinking and in engineering is known as control and communication. In other words, cybernetics attempts to find the common elements in the functioning of automatic machines and of the human nervous system, and to develop a theory that will cover the entire field of control and communication in machines and in living organisms.

An ambitious programme indeed. The goal of Cybernetics was to find a complete theoretical account of the mechanisms such as feedback that enable animals (and possibly machines) to act independently and purposefully. It was a study of the machinery of agency and intelligence.

There is no space for a history of Cybernetics here. The group had some successes, in particular McCulloch and Pitts's work on the computing capacities of artificial nervous systems. However, it is fair to say that by the late 1950s its star was sinking. It was being challenged by a new and exciting perspective on mechanised thought – AI.