Estimating the likely outcome of the referendum on the UK’s membership of the EU was always going to be a challenge for the opinion polls. In a general election, they can draw on years of experience of what does and does not work when estimating the level of support for the various political parties. They still make mistakes, as was evident last year, but at least they can learn from them. In a one-off referendum they have no previous experience to draw on, and there is certainly no guarantee that what has worked in a general election will prove effective in what is a very different kind of contest.

On Thursday, Nigel Farage appeared to concede defeat based on poll numbers...

Meanwhile, the subject matter of this referendum raised a particular challenge. General elections in the UK are primarily about left and right. The question is whether the government should be doing a little more or a little less. The social division underlying this debate tends to be between the middle class and the working class.

But this referendum was about something different. With immigration featuring as one of the central issues, it was a division between “social liberals” and “social conservatives”. The former tend to be comfortable with the diversity that comes with immigration, while the latter prefer a society in which people share the same customs and culture. Social liberals were inclined to vote in favour of remaining in the EU, while social conservatives were more inclined to vote to leave.

The principal social division behind this debate is not social class but education. Graduates tend to be social liberals, while those with few, if any, educational qualifications are inclined to be social conservatives. Age matters too, with younger people tending to be more socially liberal.

Pollsters in the UK have less experience of measuring this dimension of politics. They do not, for example, necessarily collect information on the educational background of their respondents as a matter of routine. Yet any poll that contained too many or too few graduates was certainly at risk of over- or underestimating the level of support for staying in the EU.

Moreover, we do not know whether there is any reason to anticipate availability bias, that is, whether Remain or Leave supporters are easier for pollsters to find than those of the opposite view. Equally, the pollsters have less idea of what those who say they do not know how they are going to vote will eventually do.

Online or on the phone?

The pollsters’ difficulties in estimating referendum vote intentions were all too obvious during the referendum campaign. In particular, polls conducted by phone systematically diverged from those done via the internet in their estimate of the relative strength of the two sides. For much of the campaign, phone polls reckoned that Remain was on 55% and Leave on 45%. Internet polls, by contrast, were scoring the contest at 50% each, a fact that often seemed to be ignored by those who were confident that the Remain side would win. This divergence alone was clear evidence of the potential difficulty of estimating referendum vote intention correctly.

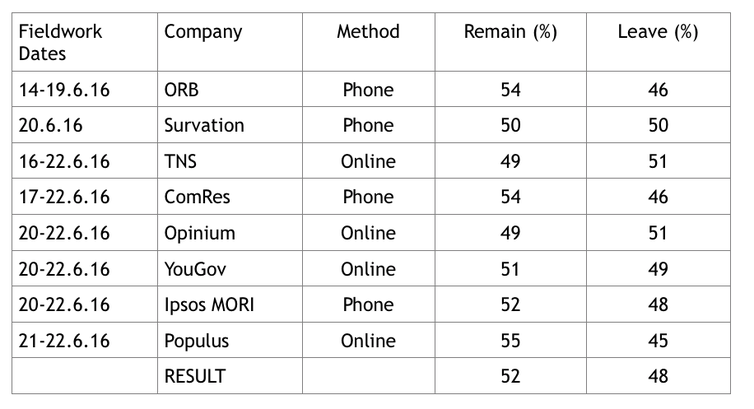

In the event, that difficulty became plain when the ballot boxes were opened. Eight polling companies published “final” estimates of referendum voting intention based on interviewing that concluded no more than four days before polling day.

Although two companies did anticipate that Leave would win, and one reckoned the outcome would be a draw, the remaining five companies all put Remain ahead. No company even managed to estimate Leave’s share exactly, let alone overestimate it. In short, the polls (and especially those conducted by phone) collectively underestimated the strength of Leave support.

There is little doubt that the companies are disappointed with this outcome. Some have already issued statements that they will be investigating what went wrong. The British Polling Council has indicated that it will be asking its members to undertake such investigations and may have the findings externally reviewed. It will inevitably take a while before we get to the bottom of what went wrong. However, it is already clear that there is one issue that will be worthy of investigation.

As the pollsters worked out their final estimates of the eventual outcome, many of them made different decisions from those they had made previously about how to deal with the possible impact of turnout and with the eventual choice made by the “don’t knows”. In the event, those decisions did not improve their polls’ accuracy.

On average, the eight polls between them anticipated that Remain would win with 52% and Leave would end up with 48%. If all the pollsters had stuck to what they had been doing earlier in the campaign (and if Populus had not adjusted its figures in the way that it did), the average score of the polls would have been Remain 50%, Leave 50%. In short, at least half of the error in the polls may be a consequence of the decisions that the pollsters made about how to adjust their final figures. Polling a referendum truly is a tough business.

This article was originally published on The Conversation.