
Printable page generated Sunday, 17 May 2026, 3:38 PM

Moodle Quiz (iCMA) reports

1 The Moodle Quiz (iCMA) reports

Very important

It is important to understand that, when reviewing a student's performance, module team members and tutors may see information that is not visible to students. In particular:

- Feedback on a deferred feedback iCMA is not available to students until after the closing date but is available to tutors reviewing a student's attempt.

- All feedback fields are shown in a review even if they are never shown to students.

To experience an iCMA as students experience it, module team members and tutors should 'Preview' the iCMA.

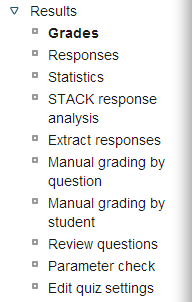

To access the reports, click on the name of an iCMA and, when it appears, select the Results link.

The Results section expands to show a variety of reports. The number of reports that you can see depends on your role. The full extent of the reports is described here.

When reading the reports, please remember that students drop out. The number of current individual students listed on the Grades page may be smaller than the total number of students who have attempted an iCMA as reported on the Statistics page.

Grades: Lists students who have interacted with the iCMA together with dates and grades achieved. Students who have made multiple attempts have multiple entries beneath their name. By clicking on a date or a grade it is possible to step through the student's responses to the questions.

Responses: Provides a spreadsheet-like representation of all responses.

Statistics: Provides various statistical calculations for the iCMA as a whole and for individual questions within it. These are described in the next section.

STACK response analysis: Provides a detailed breakdown of responses to STACK questions.

Extract responses: Provides student responses in a file which may be submitted to the university's anti-plagiarism systems.

Manual grading by question/student: Enables human markers to mark essay questions.

Review questions: Provides authors with a printable view of the question editing forms for all the questions in an iCMA. This can be used for checking the iCMA prior to the opening date.

Parameter check: Helps authors ensure that their iCMA is correctly configured.

Edit quiz settings: Enables E&A administrators to amend the opening and closing dates without having full iCMA authoring permissions.

The Grades, Responses and Statistics options of the iCMA reports provide details of students' performance on each question together with statistics that enable you to quickly identify problem questions. Problem questions fall into two categories:

- questions that have identified student learning difficulties. Incorrect responses which are repeated by multiple students probably need specific feedback to correct widespread misunderstandings.

- questions that are not coping adequately with student responses. You will need to ensure that your response matching has coped with all the responses that your students have given. Clearly you will wish to ensure that all correct responses have been marked accordingly.

Empirical evidence shows that when you have studied the responses for ~100 students you are likely to have covered the majority of possible responses. For free text responses we recommend studying the responses of 200 students or more.

NB: For summative iCMAs there are strict controls over when questions can be amended. For formative iCMAs we leave this to the module team's discretion.

2 Access to student answers

Because the iCMA reports contain personal data we restrict access as follows.

| OU role | VLE 'role' | Access |
| --- | --- | --- |
| Student | Student | Students can review their own iCMAs. |
| Associate lecturer | Tutor | ALs can review the iCMAs of all students in their tutor group. |
| Module Team | Website updater | Module team members with the Website updater role can review the iCMAs of all students on the module and access the various analyses. |

No other VLE roles provide access to the iCMA reports. If other staff wish to follow results, perhaps to check on how a set of questions is performing, they should obtain the permission of the Module Team chair to be given the Website updater role.

3 The responses report

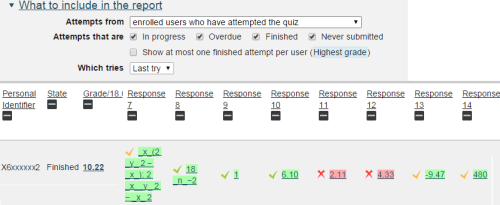

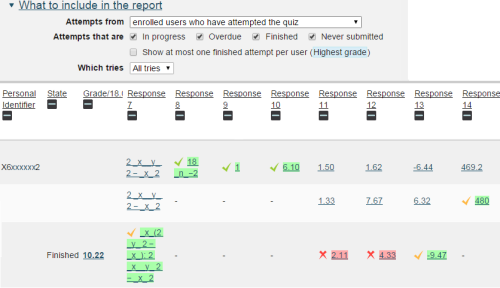

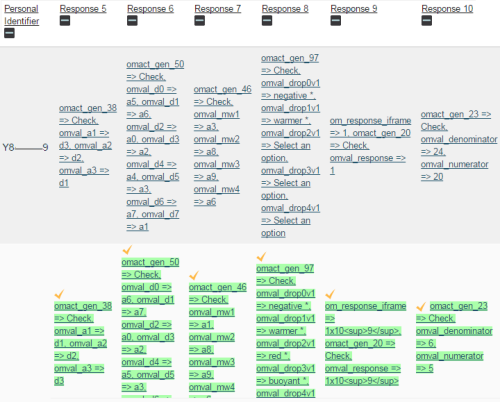

It is possible to extract the last response to a question or, if the test has run with the 'interactive with multiple tries' behaviour, the responses at all tries. The two figures below show these two views for the same student on the same test.

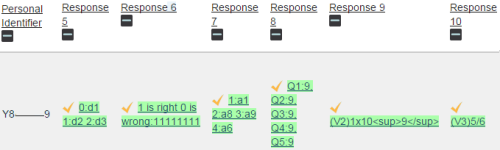

Please be aware that if responses to OpenMark questions are displayed, they appear in different forms in the two views. The 'last try' view uses the style specified by the author with getResults().setAnswerLine(responseString). Here response 10 is shown as 5/6.

In contrast the 'all tries' view uses the detail extracted from the form. The 5 and 6 from the second response to question 10 are presented with the names of the fields they were extracted from.

4 The statistics reports

4.1 Test statistics

Very important

These statistics are designed for use with summative iCMAs where students have just one attempt and complete that attempt.

Average grade:

For discriminating, deferred feedback tests, aim for between 50% and 75%. Values outside these limits need thinking about. Interactive tests with multiple tries invariably lead to higher averages.

Median grade:

Half the students score less than this figure.

Standard deviation:

A measure of the spread of scores about the mean. Aim for values between 12% and 18%. A smaller value suggests that scores are too bunched up.

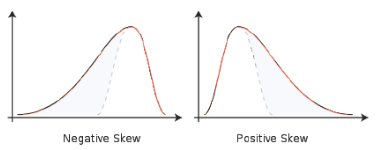
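As a rough illustration of the average, median and standard deviation described above, the sketch below computes them for a made-up list of overall grades and flags any that fall outside the suggested ranges. The function names and the sample grades are hypothetical, and the population form of the standard deviation is an assumption; the estimator used by the report itself may differ slightly.

```python
from statistics import mean, median, pstdev


def summarise_grades(grades_pct):
    """Return the average, median and SD of overall grades given in percent."""
    return {
        "average": mean(grades_pct),
        "median": median(grades_pct),
        "sd": pstdev(grades_pct),  # population SD: an assumption of this sketch
    }


def outside_targets(summary):
    """Name the statistics that fall outside the ranges suggested in the text."""
    flags = []
    if not 50 <= summary["average"] <= 75:
        flags.append("average")
    if not 12 <= summary["sd"] <= 18:
        flags.append("sd")
    return flags


# Hypothetical overall grades (percent) for ten students.
grades = [35, 48, 52, 55, 60, 63, 67, 70, 78, 92]
summary = summarise_grades(grades)
print(summary, outside_targets(summary))
```

An empty list from outside_targets means that both the average and the standard deviation sit inside the recommended bands.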

Skewness:

A measure of the asymmetry of the distribution of scores. Zero implies a perfectly symmetrical distribution, positive values a ‘tail’ to the right and negative values a ‘tail’ to the left.

Aim for a value of -1.0. If it is too negative, it may indicate a lack of discrimination between students who do better than average. Similarly, a large positive value (greater than 1.0) may indicate a lack of discrimination near the pass/fail border.

Kurtosis:

Kurtosis is a measure of the flatness of the distribution. A normal, bell-shaped distribution has a kurtosis of zero. The greater the kurtosis, the more peaked the distribution, without much of a tail on either side. Aim for a value in the range 0-1. A value greater than 1 may indicate that the test is not discriminating very well between very good or very bad students and those who are average.
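To make the sign conventions for skewness and kurtosis concrete, here is a minimal sketch using simple moment-based definitions, with kurtosis reported relative to the normal distribution so that a bell-shaped curve gives zero. These textbook formulas are an assumption; the report may apply small-sample corrections, so its figures need not match exactly. All names are hypothetical.

```python
def central_moment(values, k):
    """k-th central moment of a list of numbers."""
    m = sum(values) / len(values)
    return sum((v - m) ** k for v in values) / len(values)


def skewness(values):
    """Positive for a tail to the right, negative for a tail to the left."""
    return central_moment(values, 3) / central_moment(values, 2) ** 1.5


def excess_kurtosis(values):
    """Zero for a normal distribution; larger values mean a sharper peak."""
    return central_moment(values, 4) / central_moment(values, 2) ** 2 - 3


symmetric = [1, 2, 3, 4, 5]
right_tail = [1, 1, 1, 1, 10]
left_tail = [1, 10, 10, 10, 10]
print(skewness(symmetric), skewness(right_tail), skewness(left_tail))
print(excess_kurtosis(symmetric))
```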

Coefficient of internal consistency (CIC):

It is impossible to get internal consistency much above 90%. Anything above 75% is satisfactory. If the value is below 64%, the test as a whole is unsatisfactory and remedial measures should be considered.

A low value indicates either that some of the questions are not very good at discriminating between students of different ability and hence that the differences between total scores owe a good deal to chance or that some of the questions are testing a different quality from the rest and that these two qualities do not correlate well – i.e. the test as a whole is inhomogeneous.
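The text above does not give a formula for the CIC. A common measure of internal consistency is Cronbach's alpha, and the sketch below assumes that form purely for illustration; consult the underlying calculations referenced in section 4.3 for the definition the report actually uses. The function name and the sample scores are hypothetical.

```python
from statistics import pvariance


def internal_consistency(scores):
    """Cronbach's alpha in percent; scores[s][q] is student s's score on question q."""
    n_questions = len(scores[0])
    totals = [sum(row) for row in scores]
    question_variances = [
        pvariance([row[q] for row in scores]) for q in range(n_questions)
    ]
    alpha = (n_questions / (n_questions - 1)) * (
        1 - sum(question_variances) / pvariance(totals)
    )
    return 100 * alpha


# Every question ranks the students identically: a homogeneous test.
consistent = [[0, 0, 0], [1, 1, 1], [0, 0, 0], [1, 1, 1]]
# The two questions are unrelated to each other: an inhomogeneous test.
unrelated = [[0, 0], [0, 1], [1, 0], [1, 1]]
print(internal_consistency(consistent), internal_consistency(unrelated))
```

The two sample data sets sit at the extremes: questions that agree perfectly give a value of 100%, and questions that are unrelated give 0%.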

Error ratio (ER):

This is related to CIC as shown in the table below. It estimates the percentage of the standard deviation which is due to chance effects rather than to genuine differences of ability between students. Values of ER in excess of 50% cannot be regarded as satisfactory: they imply that less than half the standard deviation is due to differences in ability and the rest to chance effects.

| CIC | 100 | 99 | 96 | 91 | 84 | 75 | 64 | 51 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| ER | 0 | 10 | 20 | 30 | 40 | 50 | 60 | 70 |
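Every pair of values in the CIC and ER table is consistent with the relationship ER = 100 × √(1 − CIC/100). The sketch below reproduces the table from that relationship, which is inferred from the tabulated values rather than stated in the text; the function name is hypothetical.

```python
from math import sqrt


def error_ratio(cic_pct):
    """Error ratio in percent for a CIC given in percent (relationship inferred from the table)."""
    return 100 * sqrt(1 - cic_pct / 100)


for cic in (100, 99, 96, 91, 84, 75, 64, 51):
    print(cic, round(error_ratio(cic)))
```

This also shows why a CIC of 75% is the threshold for a satisfactory test: it is the point at which ER reaches 50%.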

Standard error (SE):

This is SD × ER/100. It estimates how much of the SD is due to chance effects and is a measure of the uncertainty in any given student’s score. If the same student took an equivalent iCMA, his or her score could be expected to lie within ±SE of the previous score. The smaller the value of SE the better the iCMA, but it is difficult to get it below 5% or 6%. A value of 8% corresponds to half a grade difference on the University Scale – if the SE exceeds this, it is likely that a substantial proportion of the students will be wrongly graded in the sense that the grades awarded do not accurately indicate their true abilities.
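A short worked example of the standard error calculation, using the 8% half-grade threshold mentioned above. The function names and the input figures are hypothetical.

```python
def standard_error(sd_pct, er_pct):
    """Standard error in percent: SD multiplied by ER/100."""
    return sd_pct * er_pct / 100


def exceeds_half_grade(se_pct, threshold_pct=8):
    """True when the SE is above the half-grade threshold quoted in the text."""
    return se_pct > threshold_pct


# A test with SD = 15% and ER = 50% (that is, CIC = 75%).
se = standard_error(15, 50)
print(se, exceeds_half_grade(se))

# The same SD with a weaker test, ER = 60% (CIC = 64%).
se_weak = standard_error(15, 60)
print(se_weak, exceeds_half_grade(se_weak))
```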

4.2 Question statistics

Facility index (F):

The mean score of students on the item.

| F | Interpretation |
| --- | --- |
| 5 or less | Extremely difficult or something wrong with the question. |
| 6 - 10 | Very difficult. |
| 11 - 20 | Difficult. |
| 20 - 34 | Moderately difficult. |
| 35 - 64 | About right for the average student. |
| 66 - 80 | Fairly easy. |
| 81 - 89 | Easy. |
| 90 - 94 | Very easy. |
| 95 - 100 | Extremely easy. |

Standard deviation (SD):

A measure of the spread of scores about the mean and hence the extent to which the question might discriminate. If F is very high or very low it is impossible for the spread to be large. Note, however, that a good SD does not automatically ensure good discrimination. A value of SD less than about a third of the question maximum (i.e. 33%) in the table is not generally satisfactory.
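The sketch below shows how the facility index and the question standard deviation could be expressed as percentages of the question maximum, so that they can be compared with the guidance above. The function names and scores are hypothetical, and the population form of the standard deviation is an assumption.

```python
from statistics import mean, pstdev


def facility_index(scores, max_score):
    """Mean score on the question as a percentage of the question maximum."""
    return 100 * mean(scores) / max_score


def sd_percent(scores, max_score):
    """Spread of scores on the question as a percentage of the question maximum."""
    return 100 * pstdev(scores) / max_score


# Hypothetical scores for ten students on a question marked out of 3.
scores = [0, 1, 1, 2, 2, 2, 3, 3, 3, 3]
f = facility_index(scores, 3)
sd = sd_percent(scores, 3)
print(round(f, 1), round(sd, 1))
```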

Random guess score (RGS):

This is the mean score students would be expected to get for a random guess at the question. Random guess scores are only available for questions that use some form of multiple choice. All random guess scores are for deferred feedback only and assume the simplest situation, e.g. for multiple response questions students will be told how many answers are correct.

Values above 40% are unsatisfactory – and show that True/False questions must be used sparingly in summative iCMAs.
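To see why True/False questions fall foul of the 40% limit, consider the simplest case: a single-choice question where a guessing student is equally likely to pick any option. The sketch below assumes that model; the function name and the way options are represented (the fraction of the mark each option earns) are hypothetical.

```python
def random_guess_score(option_fractions):
    """Expected score in percent when each option is equally likely to be chosen.

    option_fractions holds the fraction of the mark (0.0 to 1.0) earned by each option.
    """
    return 100 * sum(option_fractions) / len(option_fractions)


true_false = random_guess_score([1.0, 0.0])
four_option = random_guess_score([1.0, 0.0, 0.0, 0.0])
print(true_false, four_option)
```

Under this assumption a True/False question has an RGS of 50%, above the 40% limit, whereas a four-option question has an RGS of 25%.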

Intended weight:

The question weight expressed as a percentage of the overall iCMA score.

Effective weight:

An estimate of the weight the question actually has in contributing to the overall spread of scores. The effective weights should add to 100% - but read on.

The intended weight and effective weight are intended to be compared. If the effective weight is greater than the intended weight it shows the question has a greater share in the spread of scores than may have been intended. If it is less than the intended weight it shows that it is not having as much effect in spreading out the scores as was intended.

The calculation of the effective weight relies on taking the square root of the covariance of the question scores with overall performance. If a question’s scores vary in the opposite way to the overall score, the covariance is negative and its square root cannot be taken. This indicates a very odd question which is testing something different from the rest. The computer cannot calculate the effective weights of such questions, and warning message boxes are displayed instead.
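A minimal sketch of this calculation follows, assuming that each question's effective weight is the square root of its covariance with the total score, scaled so that the weights add to 100%. The scaling step, the function names and the sample data are assumptions made for illustration. Questions with a negative covariance are returned as None, mirroring the warning described above.

```python
from math import sqrt


def covariance(xs, ys):
    """Population covariance of two equally long lists."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)


def effective_weights(scores):
    """Effective weight in percent per question; scores[s][q] is student s on question q.

    Returns None for any question whose covariance with the total is negative.
    """
    totals = [sum(row) for row in scores]
    n_questions = len(scores[0])
    covs = [covariance([row[q] for row in scores], totals) for q in range(n_questions)]
    roots = [sqrt(c) if c >= 0 else None for c in covs]
    denominator = sum(r for r in roots if r is not None)
    if denominator == 0:
        return [None] * n_questions
    return [None if r is None else 100 * r / denominator for r in roots]


# Two questions that behave alike share the spread equally.
print(effective_weights([[0, 0], [1, 1], [0, 0], [1, 1]]))
# The third question runs against the overall score, so no weight can be computed.
print(effective_weights([[0, 0, 1], [1, 1, 0], [0, 0, 1], [1, 1, 0]]))
```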

Discrimination index:

This is the correlation between the weighted scores on the question and those on the rest of the test. It indicates how effective the question is at sorting out able students from those who are less able. The results should be interpreted as follows:

| Discrimination index | Interpretation |
| --- | --- |
| 50 and above | Very good discrimination |
| 30 - 49 | Adequate discrimination |
| 20 - 29 | Weak discrimination |
| 0 - 19 | Very weak discrimination |
| -ve | Question probably invalid |
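The sketch below illustrates the discrimination index as a Pearson correlation, expressed as a percentage, between the scores on one question and the scores on the rest of the test, followed by a lookup against the interpretation bands. It assumes the scores passed in are already weighted; the function names and sample data are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient; both lists must show some variation."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def discrimination_index(question_scores, total_scores):
    """Correlation (percent) between a question and the rest of the test."""
    rest = [t - q for q, t in zip(question_scores, total_scores)]
    return 100 * pearson(question_scores, rest)


def interpret(di):
    """Map a discrimination index onto the interpretation bands."""
    if di < 0:
        return "Question probably invalid"
    if di < 20:
        return "Very weak discrimination"
    if di < 30:
        return "Weak discrimination"
    if di < 50:
        return "Adequate discrimination"
    return "Very good discrimination"


di = discrimination_index([0, 1, 2, 3], [2, 5, 8, 11])
print(di, interpret(di))
```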

Discrimination efficiency:

This statistic attempts to estimate how good the discrimination index is relative to the difficulty of the question.

An item which is very easy or very difficult cannot discriminate between students of different ability, because most of them get the same score on that question. Maximum discrimination requires a facility index in the range 30% - 70% (although such a value is no guarantee of a high discrimination index).

The discrimination efficiency will very rarely approach 100%, but values in excess of 50% should be achievable. Lower values indicate that the question is not nearly as effective at discriminating between students of different ability as it might be and therefore is not a particularly good question.
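The text does not give a formula for discrimination efficiency. One way to express "how good the discrimination is relative to what is possible" is to divide the observed covariance between question scores and rest-of-test scores by the largest covariance the same two sets of scores could produce, which occurs when both are ranked in the same order. The sketch below assumes that definition; check the underlying calculations referenced in section 4.3 before relying on it. All names and data are hypothetical.

```python
def covariance(xs, ys):
    """Population covariance of two equally long lists."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)


def discrimination_efficiency(question_scores, rest_scores):
    """Observed covariance as a percentage of the best achievable covariance."""
    observed = covariance(question_scores, rest_scores)
    # Sorting both lists pairs the highest question scores with the highest
    # rest-of-test scores, which maximises the covariance for these values.
    best = covariance(sorted(question_scores), sorted(rest_scores))
    if best == 0:
        return None  # no spread in one of the lists, so nothing to compare
    return 100 * observed / best


perfect = discrimination_efficiency([0, 1, 2, 3], [2, 4, 6, 8])
partial = discrimination_efficiency([0, 1, 2, 3], [4, 2, 6, 8])
print(perfect, partial)
```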

4.3 Underlying calculations

The mathematical calculations carried out are specified on the Moodle Docs site, on the page 'Moodle Statistics calculations'.